Protecting the AI/ML Pipeline

The AI/ML lifecycle—from training data to deployment—introduces entirely new attack vectors. Our services secure your models against adversarial attacks, data poisoning, and model theft, ensuring the trustworthiness and integrity of your intelligent systems.

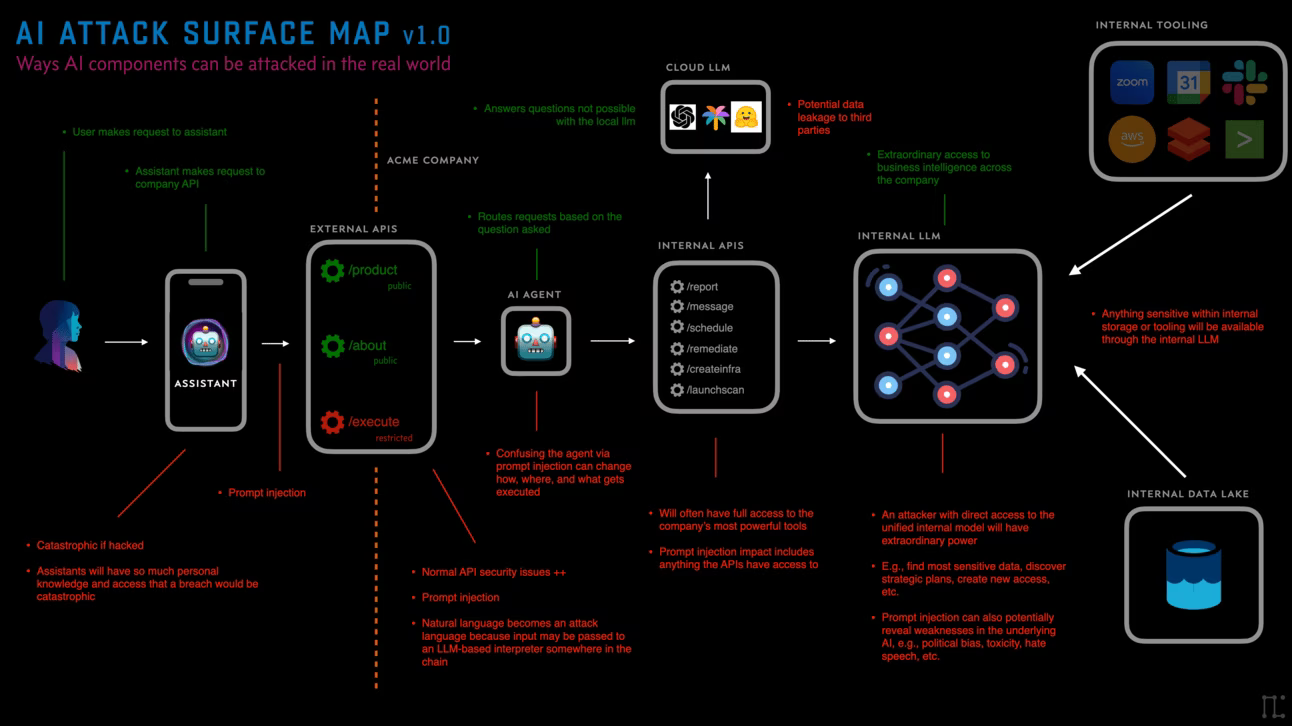

The AI Attack Surface: Adversarial Threats

Attackers now target the logic and data of AI models, not just the underlying infrastructure. We focus on detecting and mitigating the main classes of threats that exploit model vulnerabilities and lead to biased results, degraded performance, or outright compromise of the model.

- Data Poisoning: Tampering with training data to degrade or bias the model's behavior.

- Model Inversion: Reconstructing sensitive training data by querying the deployed model.

Our Three Pillars of AI Defense

1. Training Data Integrity

Ensuring that your training data supply chain is immutable and authenticated, so that data poisoning and unauthorized manipulation of inputs are prevented before they reach training.
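As a rough illustration of what an authenticated training-data check could look like, the sketch below hashes every file in a dataset directory, signs the resulting manifest with an HMAC key, and later reports any file that was changed or added. The function names (`build_manifest`, `sign_manifest`, `verify_dataset`), the shared-key scheme, and the directory layout are assumptions made for this example, not a description of any specific product.

```python
import hashlib
import hmac
from pathlib import Path


def sha256_of(path: Path) -> str:
    """Return the SHA-256 hex digest of a file, read in chunks."""
    digest = hashlib.sha256()
    with path.open("rb") as fh:
        for chunk in iter(lambda: fh.read(65536), b""):
            digest.update(chunk)
    return digest.hexdigest()


def build_manifest(data_dir: Path) -> dict[str, str]:
    """Map each file's relative path to its content hash."""
    return {
        p.relative_to(data_dir).as_posix(): sha256_of(p)
        for p in sorted(data_dir.rglob("*"))
        if p.is_file()
    }


def sign_manifest(manifest: dict[str, str], key: bytes) -> str:
    """HMAC-SHA256 over the sorted manifest entries (hypothetical shared key)."""
    payload = "\n".join(f"{name}:{h}" for name, h in sorted(manifest.items()))
    return hmac.new(key, payload.encode(), hashlib.sha256).hexdigest()


def verify_dataset(
    data_dir: Path, manifest: dict[str, str], signature: str, key: bytes
) -> list[str]:
    """Return the files that differ from the signed manifest (empty if clean)."""
    if not hmac.compare_digest(sign_manifest(manifest, key), signature):
        raise ValueError("manifest signature mismatch")
    current = build_manifest(data_dir)
    changed = [n for n in manifest if current.get(n) != manifest[n]]
    added = [n for n in current if n not in manifest]
    return sorted(changed + added)
```

A training job could call `verify_dataset` before loading data and refuse to start if the returned list is non-empty. In practice an asymmetric signature would usually be preferable to a shared HMAC key; HMAC is used here only to keep the sketch within the standard library.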

2. Model & Runtime Protection

Monitoring model APIs for suspicious query patterns indicative of adversarial attacks, prompt injection (for LLMs), or attempts at model stealing.
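One simple signal for suspicious query patterns is an unusually high request volume from a single client, which can accompany model-stealing attempts. The sketch below keeps a sliding window of timestamps per client and flags any client that exceeds a threshold. The class name `QueryRateMonitor` and the default thresholds are illustrative assumptions; a real deployment would likely combine this with other signals, such as input similarity or output entropy.

```python
from collections import defaultdict, deque


class QueryRateMonitor:
    """Flags clients whose query count in a sliding time window exceeds a limit."""

    def __init__(self, max_queries: int = 100, window_seconds: float = 60.0):
        # Thresholds are placeholders; tune them to the API's normal traffic.
        self.max_queries = max_queries
        self.window_seconds = window_seconds
        self._history: dict[str, deque] = defaultdict(deque)

    def record(self, client_id: str, timestamp: float) -> bool:
        """Record one query and return True if the client is now over the limit."""
        window = self._history[client_id]
        window.append(timestamp)
        # Drop timestamps that have fallen out of the sliding window.
        while window and timestamp - window[0] > self.window_seconds:
            window.popleft()
        return len(window) > self.max_queries
```

For example, with `QueryRateMonitor(max_queries=3, window_seconds=10)`, a fourth query from the same client within ten seconds is flagged, while a query arriving after the window has passed is not.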

3. MLOps Pipeline Security

Integrating security controls into the MLOps process (CI/CD), securing containerized environments, and enforcing least-privilege access for model training infrastructure.
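A least-privilege control can be checked automatically as a CI/CD step. The sketch below scans a list of access grants and reports any that use wildcard actions or resources. The policy structure (dictionaries with `principal`, `actions`, and `resources` keys) and the function name `find_overbroad_grants` are hypothetical and chosen only for illustration; they do not correspond to any particular cloud provider's policy format.

```python
def find_overbroad_grants(policy: list[dict]) -> list[str]:
    """Return one finding per grant that contains a wildcard action or resource."""
    findings = []
    for grant in policy:
        entries = list(grant.get("actions", [])) + list(grant.get("resources", []))
        wildcards = [value for value in entries if "*" in value]
        if wildcards:
            principal = grant.get("principal", "<unknown>")
            findings.append(f"{principal}: {', '.join(wildcards)}")
    return findings


# Hypothetical grants for a training environment.
example_policy = [
    {
        "principal": "training-job",
        "actions": ["storage:read"],
        "resources": ["datasets/train"],
    },
    {
        "principal": "ci-runner",
        "actions": ["*"],
        "resources": ["models/*"],
    },
]
```

A pipeline step could fail the build whenever `find_overbroad_grants` returns a non-empty list, so that overly broad permissions are reviewed before they reach the training infrastructure.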